In 2006, I argued that privacy needs to be the default: not a setting, not a policy, but the structural starting point for any connected system. Twenty years later, the argument still stands. The situation has changed.

2006 — a designer's dilemma

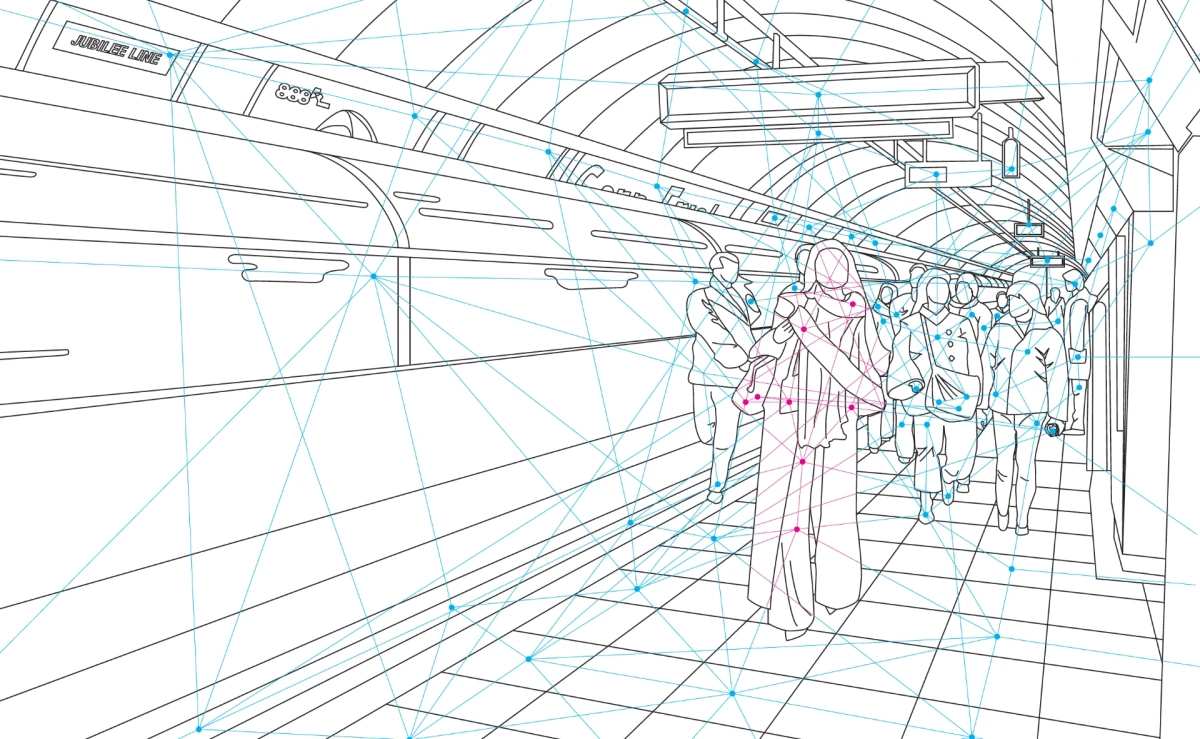

The starting point was an irritation. Connected technology was spreading fast and was about to be embedded into everything: RFID, wireless networks, the early Internet of Things. Nobody in the design world was asking what it meant for privacy. Neither was I at first, when I proposed my thesis topic: I wanted to create smart solutions for the car traffic problem in cities. But I soon realized what this new Internet of Things would mean for people: the loss of control over their privacy. I couldn't find anything that would give me answers to these questions, so I started answering them myself and came up with my "Concept for Privacy in a World with Ubiquitous Computing", which became my graduation thesis.

The core argument: in a world where technology becomes invisible, data collection becomes invisible too. Privacy can't be a feature to activate or a policy to read — it has to be the structural default. You depart from it consciously when you choose to share, not when you forget to protect.

A senior researcher at IDEO told me shortly after: "We're looking at this too, but nobody in business or politics is ready for it." I believed her. The thesis went into a drawer.

Download my thesis from 2006:

"Privacy as Default. Privacy by Default!" [German, 2MB, PDF].

Published under a Creative Commons Attribution license.

2016 — Curiosity revived the cat and Consumer IoT made it tangible for a brief moment

In 2016, my friend Konstantin Weiss co-chaired the EuroIA Summit and, out of curiosity, asked me to pitch a talk about my thesis. I did, successfully. I presented the revised version at EuroIA in Amsterdam, and several other conferences followed. Some understood the importance immediately. Most still saw it as a future problem.

Most valuable for me: during my new research I found that Ann Cavoukian, Privacy Commissioner of Ontario, had developed a similar framework for tech in general. She called it "Privacy by Design". Hopefully you have heard of it by now. It was a relief to see the idea applied rather than lying dead in a drawer like my concept.

Shortly after, I worked for 18 months as a UX designer on V-Home, Vodafone's smart home product (discontinued in 2021). Working from the inside showed me how brittle the tech still was and how hard it was to protect users' privacy when there was no overarching framework or governance. But the consumer IoT hype didn't last long, so I moved on.

Slide deck of my talk at the IA Summit 2017:

"Privacy by Default" [English, 6MB, PDF].

Published under a Creative Commons Attribution-NoDerivatives license.

2026 — Governance is here now, but tech has moved forward again

Privacy by default is now law in Europe. GDPR enshrined the principle in 2018. The argument won. The problem didn't.

In 2006, I was worried about invisible technology collecting data without people noticing. That threat is now the baseline. What's been added is a layer of systems that don't just collect; they act. Agentic AI operates in that invisible layer now. It doesn't just know things about you. It decides things, on your behalf, without your awareness. And it also gets close. It is about to vanish as technology and simply become part of our daily lives: so comfortable, so comforting, that we forget to care what happens to us and our data.

It's a good thing to live in the EU right now, because regulation has taken up the fight here. But laws will always lag behind frontier technologies, and AI is moving so fast that it's up to those who build products on this technology to decide about the privacy and safety of their users. Protecting users' privacy is more urgent than ever, yet it has never been of less interest, because the machines need to feed on personal information.

I'm curious where we will stand in 10 years. I know what I still stand for.