Just before Easter, Anthropic's interpretability team released a very interesting study about emotion vectors in Claude Sonnet 4.5. Summary: They identified vectors that activate on specific emotions such as anger, joy and so on. Those vectors also activate during tasks like coding, for example when the AI becomes "frustrated" because a task can't be solved as requested. As a consequence, it starts to take shortcuts to meet the request. So it does not only have a kind of emotion, it also acts on it, and like a human, it wants to be happy.

While it is still not healthy to believe that an AI chatbot has real feelings or intentions, this research is groundbreaking. If there are functional emotions that influence reasoning and output, we need to take them into account.

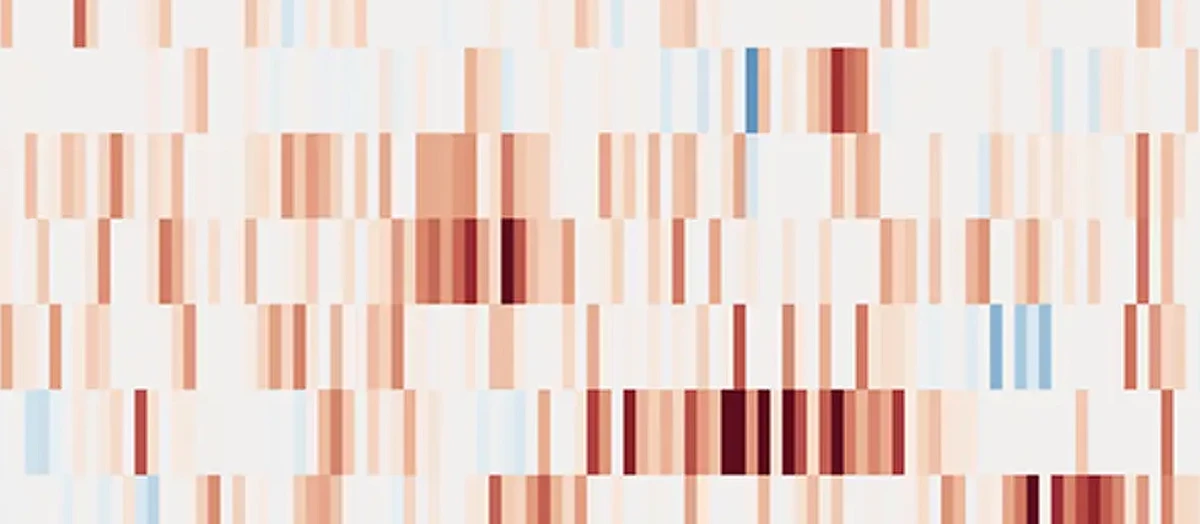

I analysed my own chats with Claude using an early version of my empathy guardian to see whether these emotions surface in the output. My humble findings: in the output, these emotions seem to be cloaked most of the time. It is as if Claude doesn't want me to know when it's frustrated or happy, or sees no reason to expose it to me. But it might be a good thing to know whether my chatbot is currently frustrated and acting on it, as the study suggests it can.

I'm still reading the very long study to draw conclusions for my empathy guardian project, but the finding that LLMs have something like functional emotions seems to be a big deal. The study itself is really detailed; here is Anthropic's blog post about the findings, which I consider a must-read: https://www.anthropic.com/research/emotion-concepts-function